The Battle-Tested Stack

The Middle East is the proving ground for commercial AI in war — and the legal vacuum around it is not a gap, it is the policy

As I write, the US and Iran have just announced a two-week ceasefire under the Islamabad framework.

The kinetic phase may be pausing. The architecture is not.

There is a sentence in the IISS Middle East Programme’s April briefing that ought to stop anyone reading it cold.

“The Middle East has become a testing ground for AI-enabled military technology, which is then marketed internationally as battle-tested.”

Battle-tested.

The phrase is doing enormous work. It is the marketing language of a commercial sector that has discovered the region’s wars are also the fastest product-validation cycle in the history of defence procurement.

Every target generated, every face matched, every cloud query routed through a hyperscaler’s “landing zone” is, in the same instant, a kill chain and a sales reference.

The two functions are no longer separable.

Forty days into Operation Epic Fury — the US-Israel campaign that began on 28 February 2026 and has now struck more than 13,000 targets across Iran — the question is no longer whether commercial AI providers are inside the kill chain.

They are.

The question is what jurisdiction, if any, can reach them.

I want to make a stronger claim than that, and I want to make it now rather than at the end. The legal vacuum around commercial AI in warfare is not an oversight. It is not a problem of legislative timing.

It is the policy.

Every major commercial AI provider, every defence ministry buying their services, and every host government providing the data-centre real estate has a direct interest in the vacuum continuing to exist. The non-binding declarations are not failed regulation. They are the regulation the parties involved were willing to accept.

That is what I want to work through here.

The infrastructure that did the targeting

Start with the architecture, because the architecture is the argument.

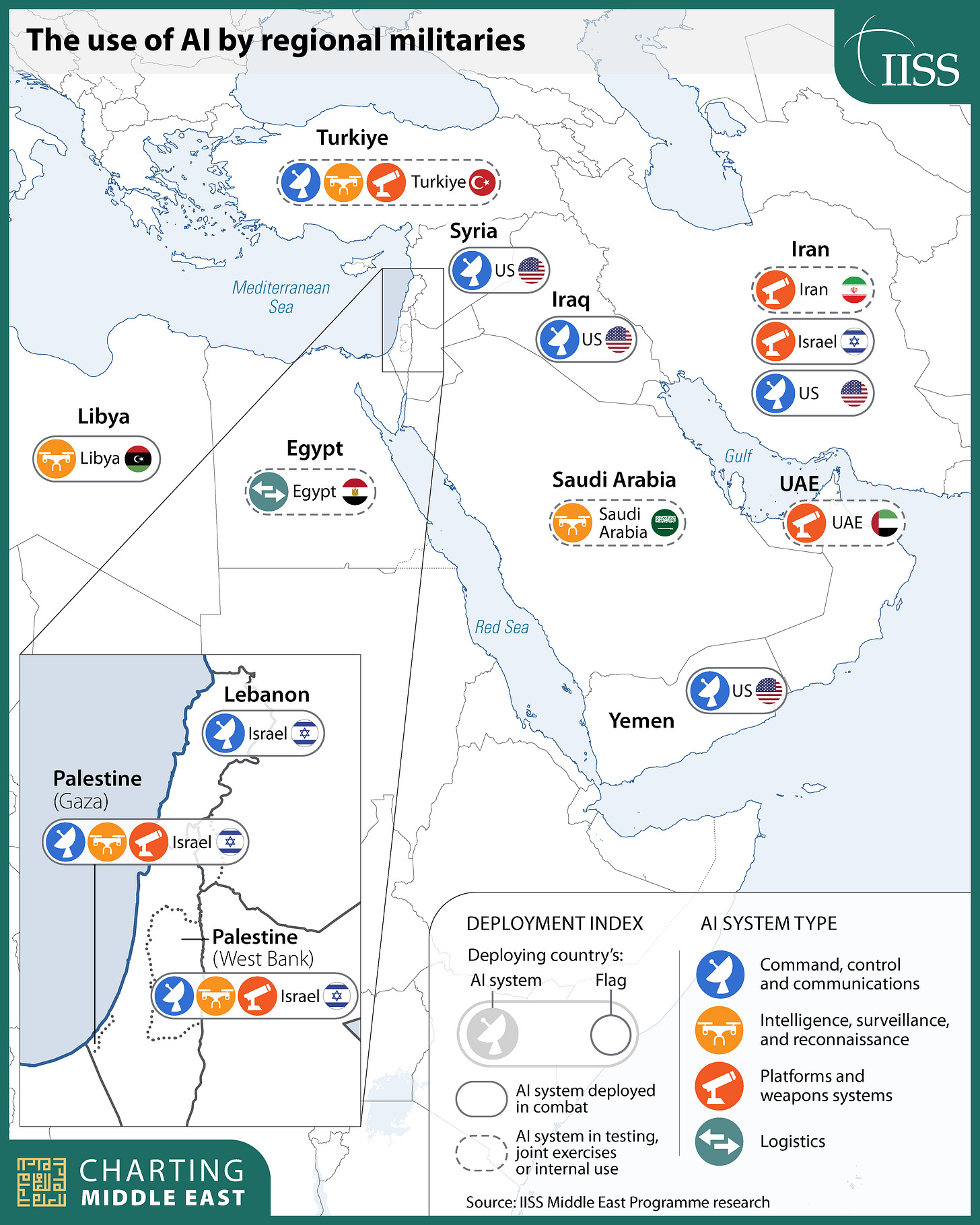

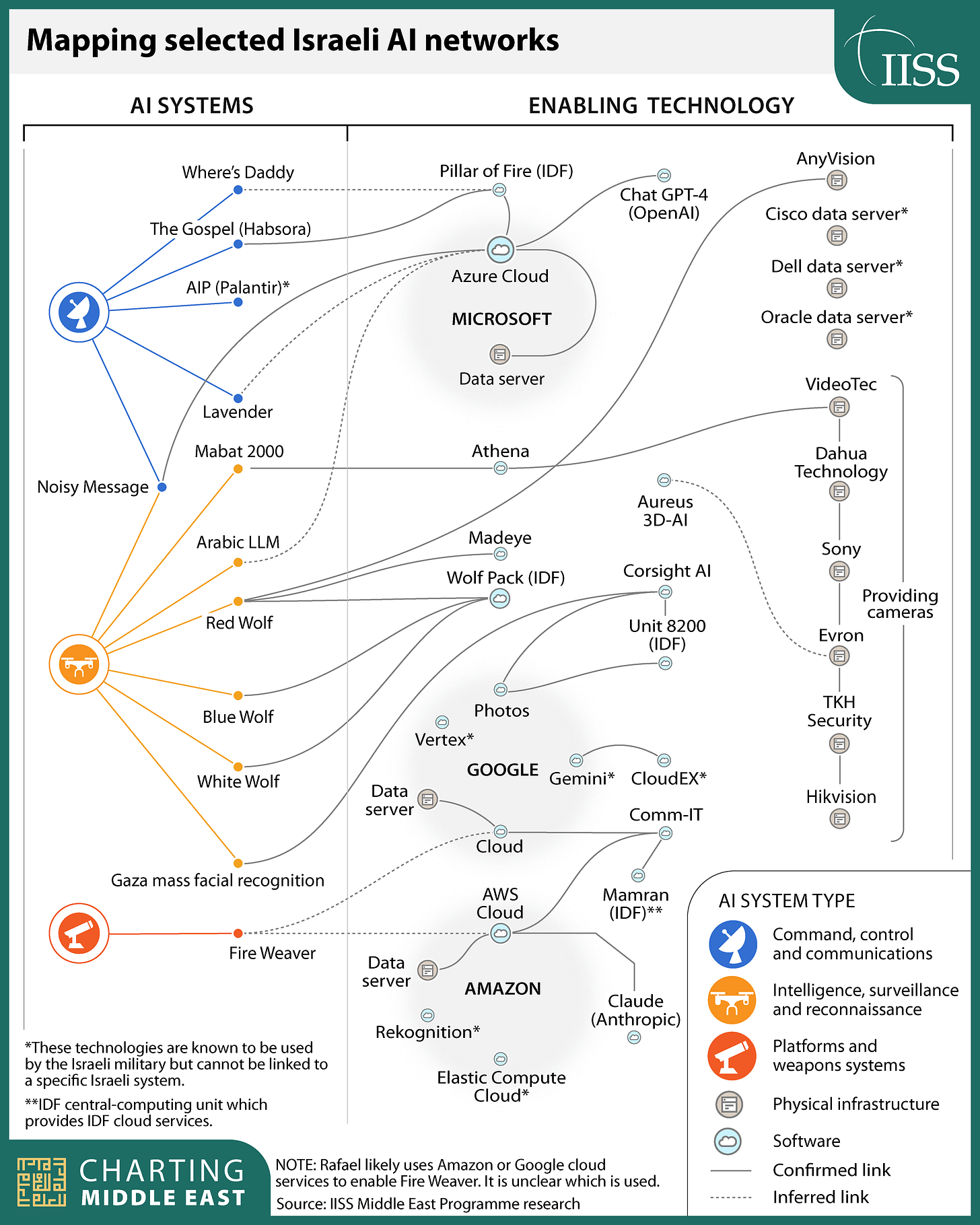

The IISS mapping is the clearest open-source picture yet of how Israeli AI-enabled targeting actually works at the stack level.

At the top sit the named decision-support systems — Lavender, The Gospel, Where’s Daddy, Pillar of Fire, Fire Weaver. Beneath them is a layer of facial-recognition and biometric infrastructure: Red Wolf, Blue Wolf, White Wolf, the Wolf Pack database, Corsight AI, an Arabic LLM developed inside Unit 8200. Beneath that sits the enabling infrastructure proper — Microsoft Azure, Google Cloud, AWS — and on top of those clouds run the model APIs: OpenAI’s GPT-4, Google’s Gemini and Vertex, Anthropic’s Claude, hosted via Palantir’s Artificial Intelligence Platform on AWS.

This is not a metaphor.

This is the literal architecture diagram. The target list that sent 37,000 names into IDF rotation in the first weeks of the Gaza war was generated, processed, and distributed across infrastructure provided by companies whose terms of service nominally prohibit exactly that use. The Maven Smart System that the US Department of War used to compress Operation Epic Fury’s targeting cycle to a thousand strikes in twenty-four hours runs Anthropic’s Claude on AWS servers.

The mechanism is the point.

In every previous generation of military procurement, the supplier handed over a system and walked away. The buyer owned the platform, owned the doctrine, owned the consequences. With cloud-hosted AI, none of that is true.

The model continues to live on the provider’s infrastructure. The training data continues to be the provider’s intellectual property. The terms of service continue to bind, in theory, the buyer’s behaviour. The provider is not selling a product. The provider is a continuous participant in every inference the buyer makes — for as long as the contract runs and the API keys work.

Hold that fact for a second. It changes what comes next.

Iran read the architecture diagram

When Iranian forces targeted AWS data centres in the UAE and Bahrain in the opening weeks of Epic Fury, the Western press read it as escalation theatre. Soft targets, symbolic value, deniable enough to avoid full Gulf-state retaliation. That reading is wrong, and I think the people writing it know it is wrong.

The Iranian statement was explicit.

The data centres were struck because of their role in “supporting the enemy’s military and intelligence activities” — a formulation that almost certainly refers to the hosting of Palantir’s AIP, with Anthropic’s Claude integrated, on AWS infrastructure. Iran was not improvising a justification. Iran was reading the same architecture diagram everyone else can now read.

This is the moment the abstraction breaks.

For the better part of two decades, the cloud has been sold to enterprise customers as a layer of indifferent plumbing — geographically distributed, redundantly mirrored, politically neutral. The point of the cloud was that the customer did not have to think about where the servers were. Iran has just demonstrated, with kinetic force, that the geography of the servers is now a targeting question.

If your AI inference runs on a hyperscaler’s data centre in a Gulf state, the data centre is part of the conflict. The host government inherits a piece of the conflict by virtue of letting the rack sit on its territory. The hyperscaler inherits a piece of the conflict by operating it. The model provider whose API the inference traversed inherits a piece of the conflict by serving the call.

Everything leaks into everything else.

Cloud into kill chain, kill chain into sovereign risk, sovereign risk back into the price of operating a data centre in Manama. There is no version of this story in which the commercial enablers can claim distance.

The architecture they built does not permit distance.

The throughput problem

The standard objection to writing about AI in warfare is that the technology is being oversold by both its boosters and its critics, that the actual battlefield use is more constrained than the marketing suggests, and that the human-in-the-loop is still doing most of the work that matters.

I take the objection seriously. It is mostly wrong, and the reason it is wrong is throughput.

The Gospel reportedly generates targets at a rate of roughly one every few seconds. Lavender scores individuals on suspected affiliation with armed groups and produces ranked lists. Where’s Daddy tracks individuals’ locations ahead of strikes. The compression of decision time is the entire point of integrating these systems. The “human verification” that proponents cite as the safeguard exists, but it exists at a tempo set by the machine. An analyst presented with a ranked list of three hundred names and a fifteen-minute window to clear them is not verifying. The analyst is rubber-stamping. Institutional bias toward AI-generated assessments, the well-documented tendency of human reviewers to defer to machine output under time pressure (often called automation bias), is not a bug introduced by careless implementation. It is the operating principle of the system.

Operation Epic Fury makes this visible at the campaign scale.

Five weeks in, US intelligence assessments leaked to CNN suggest the coalition has degraded only about half of Iran’s missile and drone arsenal despite striking more than 13,000 targets. Many of the strikes that planners initially recorded as successful turned out to be partial. Tunnel entrances were hit; the hardened facilities behind them were not. Decoy launchers absorbed strikes intended for real ones. The IISS briefing notes that many of the targets hit in Iran have been civilian, including a school and healthcare and residential facilities. The Soufan Center brief from 6 April notes Iran has been able to reopen bombed tunnel entrances within hours.

The civilian harm in these campaigns is not happening despite the AI integration. It is happening because of it. You cannot generate a thousand targets in a day and verify each one to the standard the laws of armed conflict require. The arithmetic does not work. The system is operating exactly as designed, and the design was never compatible with proportionality.
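The arithmetic can be made explicit. What follows is a minimal sketch, not a model of any real review process: it takes the two figures already quoted (three hundred names in a fifteen-minute window, a thousand targets in twenty-four hours) and divides the time by the workload. The function names and the thirty-minute review time in the last step are my own illustrative assumptions, not reported figures.

```python
import math


def seconds_per_item(items: int, window_seconds: float) -> float:
    """Average time available per item if the whole window is spent reviewing."""
    if items <= 0:
        raise ValueError("items must be positive")
    return window_seconds / items


def reviewers_needed(items: int, window_seconds: float, seconds_per_review: float) -> int:
    """Reviewers working in parallel, without breaks, needed to clear the workload."""
    return math.ceil(items * seconds_per_review / window_seconds)


# Figures quoted in the essay.
per_name = seconds_per_item(300, 15 * 60)           # 300 names, 15-minute window
per_target = seconds_per_item(1000, 24 * 60 * 60)   # 1,000 targets in 24 hours

# Hypothetical: assume a careful review takes 30 minutes per target.
ASSUMED_REVIEW_SECONDS = 30 * 60
staff = reviewers_needed(1000, 24 * 60 * 60, ASSUMED_REVIEW_SECONDS)

print(f"{per_name:.1f} seconds per name")      # 3.0
print(f"{per_target:.1f} seconds per target")  # 86.4
print(f"{staff} reviewers around the clock")   # 21
```

Three seconds per name is not a review. Under the assumed thirty-minute standard, a thousand targets a day would need twenty-one reviewers working around the clock without a break, and any more demanding standard scales that number up linearly.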

The throughput is the threat. That is the sentence I would put on the wall.

Project Nimbus, or the contract that ate the terms of service

The most revealing document in the entire commercial-enabler story is the Project Nimbus contract.

Project Nimbus is the $1.2 billion deal under which Google and Amazon provide cloud infrastructure to the Israeli government, including the Ministry of Defence. The terms — pieced together by investigative reporting and reviewed by IISS — contain provisions that, on inspection, dismantle every governance mechanism the providers nominally have over their own technology.

The contract reportedly prohibits Google and Amazon from suspending Israel’s access to their services, even if the providers find that their own terms of service have been violated. The contract reportedly limits Google and Amazon’s visibility into how Israeli government entities are actually using the cloud. The contract reportedly contains a “winking mechanism” — a clause requiring Google and Amazon to covertly signal to Israel if any third country has ordered them to hand over Israeli data. Both companies have denied the winking-mechanism claim. Neither has produced the contract.

Read that sequence again.

Two American hyperscalers signed a contract that bars them from enforcing their own published policies against their largest single client in the region, blinds them to the use of their own infrastructure, and obliges them to subvert the legal process of any third country that tries to assert jurisdiction over data held on their servers.

If those terms are accurate, Project Nimbus is not a service contract.

It is a structured surrender of corporate governance to a state customer, with a built-in mechanism to evade external oversight. And it was signed voluntarily, by two companies whose published human-rights commitments still cite the UN Guiding Principles on Business and Human Rights. The principles state that business enterprises should treat the risk of contributing to gross human-rights abuses as a legal compliance issue. The contract treats it as a counterparty risk to be priced and absorbed.

International humanitarian law binds states. States can bind commercial entities, in turn, by writing those obligations into domestic law and by enforcing universal jurisdiction for international crimes. Sweden has done this: two former Lundin Oil executives are currently on trial there for aiding and abetting war crimes in Sudan. The pathway exists.

What does not exist is the political will to use it against the commercial AI sector. What Project Nimbus shows is that the sector itself has been busy contractually pre-empting that pathway before it can be walked.

The quiet rewrites

The other thing the providers have been doing, while the kinetic campaigns have escalated, is rewriting their own rulebooks.

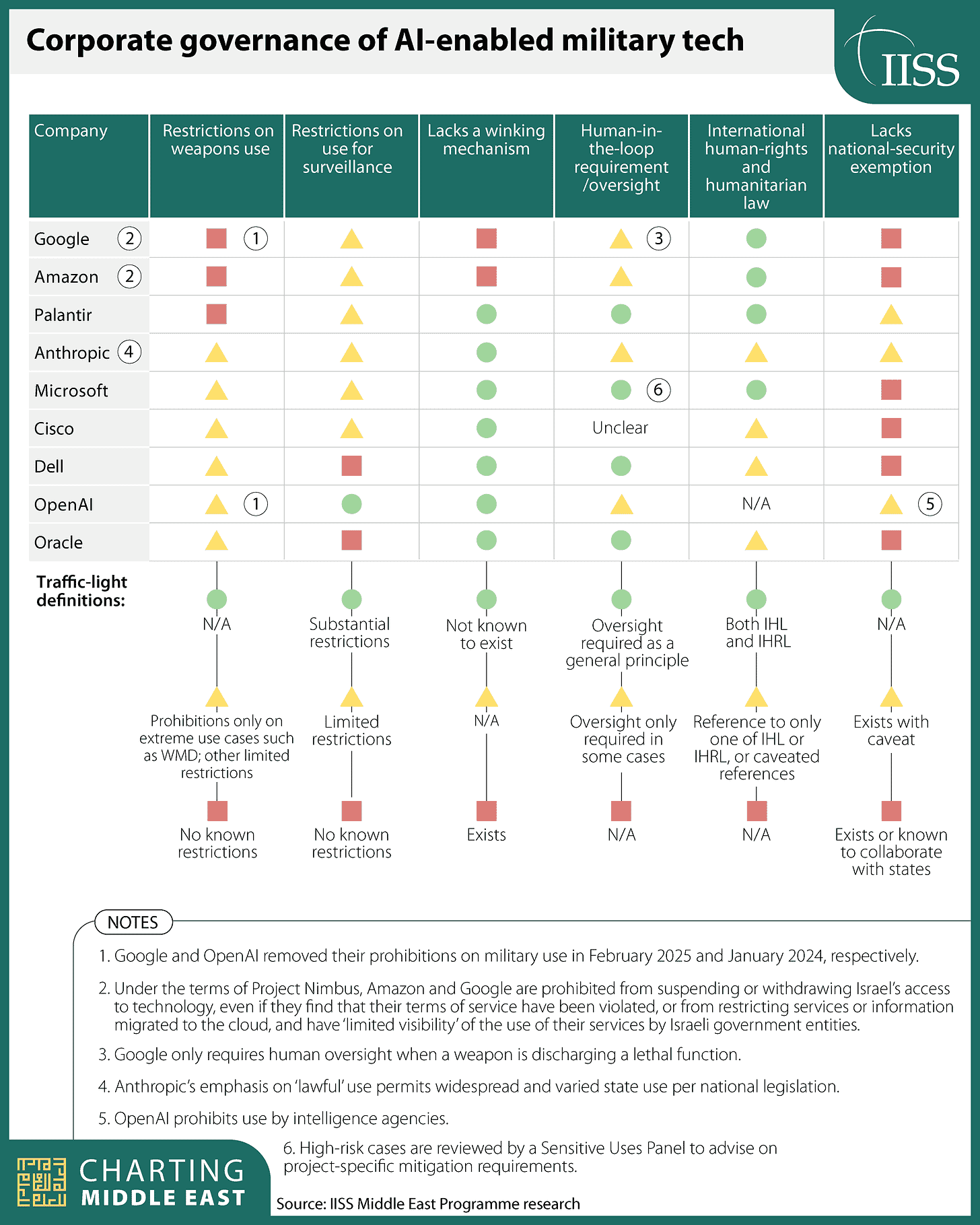

OpenAI removed its prohibition on military use in January 2024.

Google removed its equivalent prohibition in February 2025.

Anthropic’s policies, on the IISS reading, now permit “lawful” state use under national legislation — a formulation that delegates the entire question of permissibility to whichever state happens to be wielding the API key. Microsoft retains a Sensitive Uses Panel that reviews high-risk cases and advises on project-specific mitigation, which is closer to a real governance mechanism than what most of its peers offer, though the panel’s findings are not public and its recommendations are not binding on the rest of the company.

The pattern across the IISS corporate-governance matrix is what matters.

On weapons-use restrictions, most providers have moved from “no known restrictions” to “limited restrictions” to “prohibitions only on extreme use cases such as WMD.” On surveillance-use restrictions, the entire row is amber or red. On the existence of a winking mechanism, three providers — Google, Amazon, and Microsoft — sit in the “exists” or “exists with caveat” cell. On national-security exemptions, the same three sit in the “exists” cell.

The direction of travel is uniform.

Each individual rewrite was justified at the time as a clarification, a modernisation, a removal of language that had become obsolete. Stacked up, they trace the contour of an industry that has decided national-security work is the commercial trajectory it wants and is removing every published constraint that might slow the trajectory down. None of these changes required a new law. None of them triggered a meaningful regulatory response.

This is the part where I think a lot of the policy commentary still gets the question wrong.

People keep asking when the law will catch up.

The law is not behind. The law is exactly where the commercial and state actors with the most leverage want it to be.

Where the binding pressure actually comes from

If the legal vacuum is the policy, then the question is not what new instrument might fill it.

The question is which existing pressure points can be used to apply force from the outside. The Microsoft case is the closest thing to a template.

The external investigation found that Unit 8200 had stored mass-surveillance data, including intercepted Palestinian phone calls, on Azure in violation of Microsoft’s terms. Its outcome is the first hard precedent that a commercial AI provider can be forced, by external pressure, to cut off a specific state customer for specific terms-of-service violations. Microsoft terminated Unit 8200’s access. It did not terminate the rest of its Israeli government business. The legal-advocacy collective that has now formally notified Microsoft of its potential civil and criminal liability for service provision to Israel understands that the unit-level termination was a partial victory and that the campaign is going to keep escalating.

That campaign is the only governance mechanism currently functioning at the speed the technology is moving.

Not the treaties. Not the declarations. Not the corporate ethics boards.

The slow accumulation of evidentiary records — like the IISS network diagram — that make it impossible for any future court, anywhere, to accept the claim that the providers did not know what their infrastructure was being used for. The Irish Council for Civil Liberties has petitioned the Data Protection Commission. Microsoft shareholders filed a proposal to investigate human-rights due diligence. The Sudan precedent in Sweden is active.

Stacked, they describe a curve that bends in one direction.

The vacuum is portable

Two things to flag for anyone reading this with a portfolio rather than a policy brief in their hands.

The commercial AI providers are now carrying a category of legal and reputational risk that their disclosures do not yet reflect and their valuations almost certainly do not price. The first major civil judgment against a hyperscaler for cloud-enabled targeting in an active conflict will reprice the sector on the day it lands. I do not know when that judgment comes. I know the brief is being written.

And the host-government risk is real.

Every data centre an American hyperscaler operates in the UAE, Bahrain, Saudi Arabia, or Qatar is, after the Iranian strikes, a piece of dual-use infrastructure under the laws of armed conflict. The host governments have not yet had to think hard about what that means for their own legal exposure and their own physical security. They will.

The IISS briefing closes with a sentence about the Middle East as a testing ground, and the technologies then being marketed internationally as battle-tested. The reverse implication is the one that ought to register hardest. If the region is the testing ground, the rest of the world is the addressable market. The architecture the providers have built for one set of clients is the architecture they will sell to every other client who asks. The contracts the providers have signed in the region are the contracts they will sign in every other jurisdiction that demands the same terms.

The vacuum is portable. That is the part that should keep you up at night.

I had no idea cloud infrastructure was this deep in the kill chain. The throughput part is nuts...a thousand targets a day and 300 names cleared in 15 minutes. At that speed, the human in the loop is basically just a checkbox.

Ukraine was the testing ground for drones; now the Middle East is the testing ground for AI targeting. “Battle-tested” is basically a new product label.

What really got me is that the cloud stopped being neutral the moment Iran read the architecture diagram and started hitting data centers. Corporate and state interests aren’t just aligned anymore...NOW they’re literally running on the same servers. And if this is what we can see in the Middle East, I have to assume China’s quietly building the same stack on its side.

Really sharp piece, Riko.

Riko: That's a very informative essay, thank you. I had no idea. As I get older I get more and more evidence that I have no idea what I'm trading, or who is on the other side of my trade. Knowing that has made me a better risk manager because it diminishes my "faith" in crystal balls. Cheers, Victor