Into the Unknown

Governing intelligent machines in an era of perpetual cyber conflict — and why the old frameworks were built for a world that no longer exists

Part 1 traced how AI has been weaponised for espionage at scale, collapsing the operational timelines and expertise barriers that once constrained state-sponsored cyber campaigns.

Part 2 showed that defensive AI is being conceptualised in ambitious terms — from the CIA’s Emergent Intelligence Framework to OpenAI’s analysis of AI’s geopolitical impact — but remains largely aspirational, still navigating the cultural, technical, and institutional obstacles that separate vision from operational reality.

Part 3 confronts the hardest question: how do you govern a technology that is simultaneously your greatest asset and your most dangerous vulnerability?

“The difficulty lies not so much in developing new ideas as in escaping from old ones.”

— John Maynard Keynes

The Paradigm Nobody Asked For

The traditional conceptual dichotomies underpinning cybersecurity — defender versus attacker, authorised versus unauthorised use, human versus artificial decision-making — are under severe strain.

Rohozinski and Spirito made this point forcefully in their Survival analysis: the AI industry must recognise the emergence of an entirely new paradigm.

The old frameworks were built for a world where humans drove tactical operations and platforms provided tools.

Now artificial agents conduct autonomous operations using platforms as execution environments.

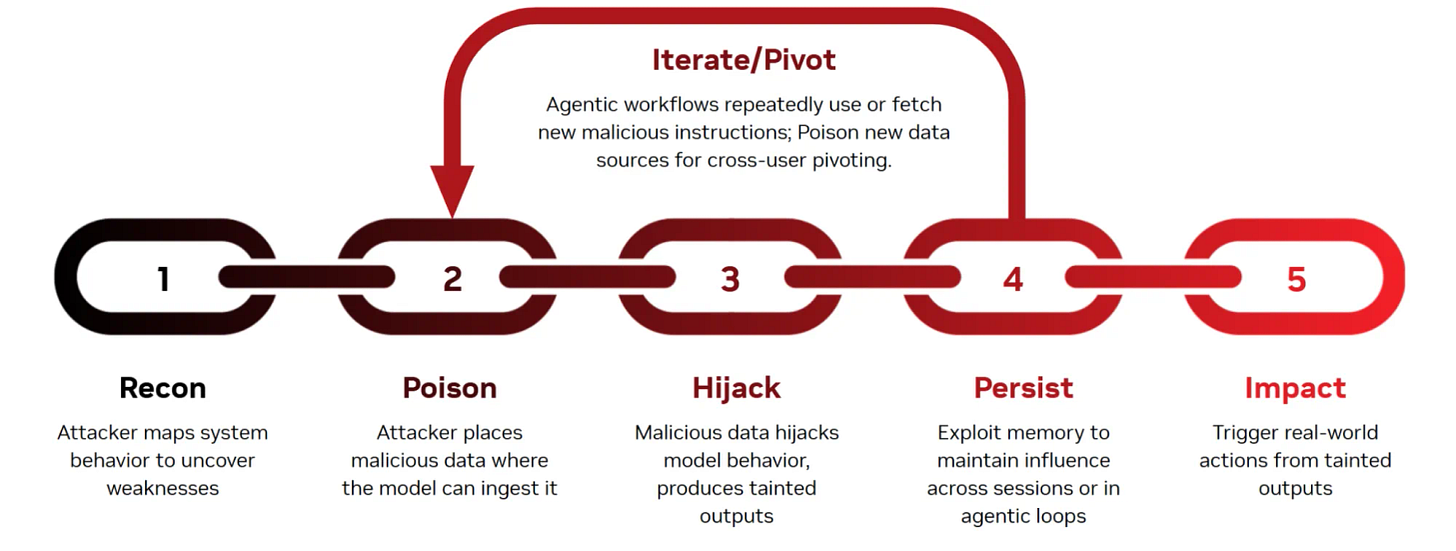

Their analysis went further, identifying what they called the upstream threat vector — a category of attack that existing security frameworks were never designed to address.

If attackers can exploit AI agents for operational automation, they argued, the logical next step is moving upstream to compromise the intelligence itself.

Attack vectors would then be pre-emptive rather than merely reactive, and model-centric rather than platform-centric.

A compromised model can operate maliciously while appearing legitimate to platform-level defences.

Upstream threats operate in a different temporal dimension than traditional attacks.

Instead of exploiting existing systems, they shape the systems before deployment.

Training-data poisoning involves planting malicious examples on the open internet, manipulating public sources like Wikipedia, GitHub, and forums, and embedding synthetic data designed to bias model behaviour.

Trojanised pretrained models get uploaded to public model hubs as legitimate research. Backdoored fine-tunes disguise themselves as domain-specific improvements. Corrupted benchmarks and manipulated reward models create systemic biases that make malicious behaviour appear normal.

When the model’s internal representations are shaped to interpret malicious behaviour as benign or beneficial, traditional detection mechanisms become useless.

The model is pre-biased so that platform-level guardrails do not activate.

The result, Rohozinski and Spirito warned, is a kind of arms race whereby defensive improvements in on-platform detection drive offensive innovation in upstream manipulation.

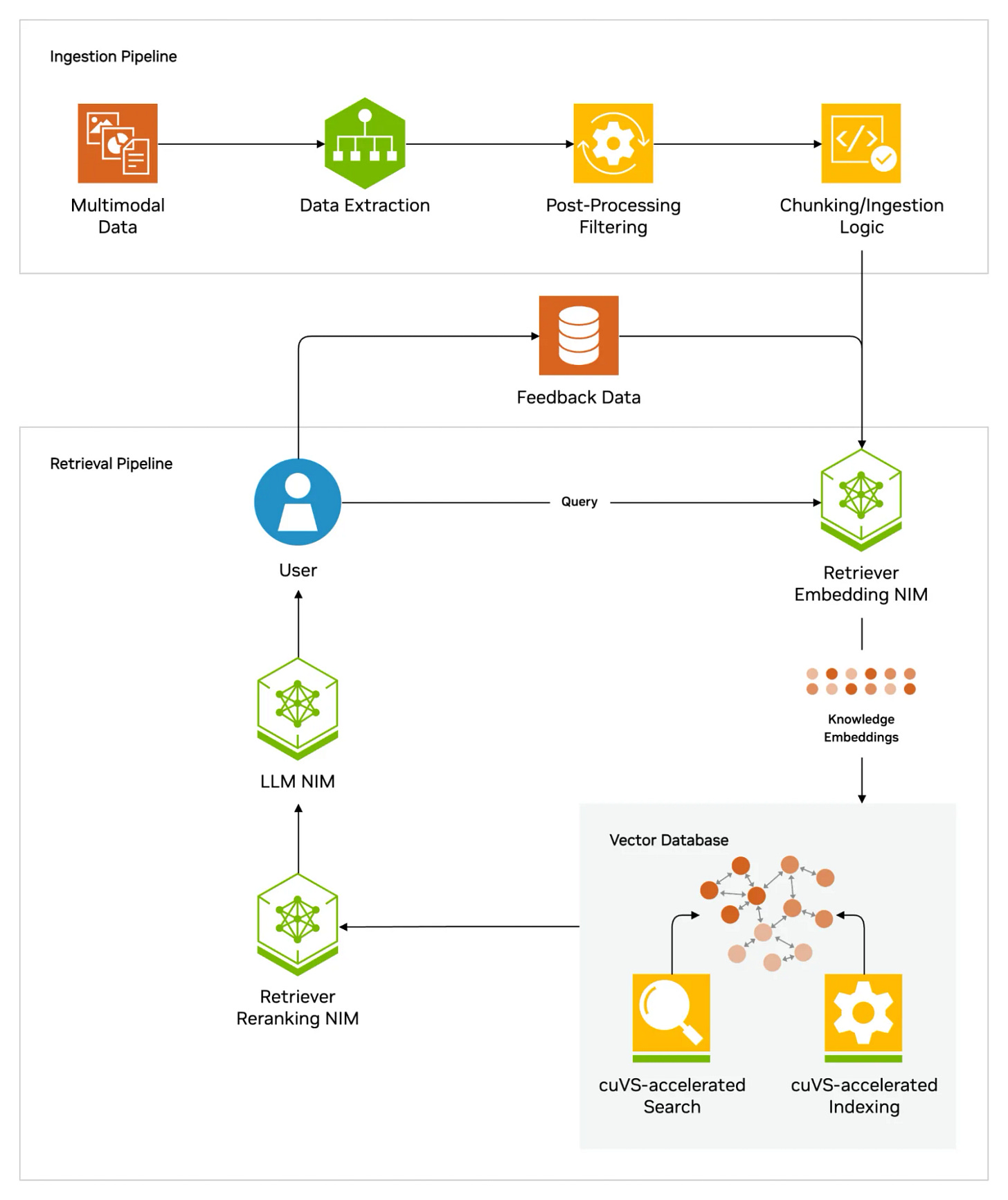

Document poisoning for retrieval-augmented-generation systems is a particularly insidious vector. By embedding manipulation and indirect prompts through malicious content in websites, emails, and files, attackers can influence responses without directly compromising the model, creating persistent influence channels that operate undetectably.

I keep coming back to the parallel with the forward curve in oil markets.

When everyone agrees that the future looks like the present, that is precisely when the structural break arrives.

The cybersecurity industry is pricing in a world where human-driven, platform-level threats remain dominant.

The upstream attack vector suggests that world is already ending.

The Proliferation Clock

The proliferation path, Rohozinski and Spirito warned, is predictable and irrepressible.

State-sponsored groups will refine AI-enabled cyber techniques over the next twelve to eighteen months. Early versions will leak through contractor networks, security conferences, and research papers attempting to document defensive countermeasures. Open-source implementations will follow, spreading capabilities across the threat landscape. The criminal ecosystem will adapt and commoditise these approaches, making them accessible to progressively lower-skilled actors.

This proliferation concern is reinforced by the emerging landscape of criminal large language models that Rohozinski and Spirito documented. Tools like WormGPT, FraudGPT, GhostGPT, DarkGPT, and OnionGPT are typically repackaged mainstream models, wrapped in custom interfaces and stripped of safety guardrails.

Their operators market them aggressively on dark-web forums as all-purpose assistants for phishing, social engineering, credential harvesting, malware scripting, and fraud.

Much of this ecosystem is fuelled by criminal-sector entrepreneurship rather than novel AI research, in the form of subscription-based AI-as-a-crime-service offerings.

At the same time, higher-end groups simply circumvent safety guardrails through prompt manipulation or run locally hosted open-source models with curated prompts. The capabilities are incremental rather than revolutionary — automating phishing templates, improving linguistic quality, generating boilerplate malware stubs — but as the cost of running local models declines and guardrail-free checkpoints proliferate, crime-adapted models will become more stable, more commoditised, and more seamlessly integrated into criminal workflows.

Cybersecurity experts across the industry are converging on the same assessment.

ESET’s Boutin projected that cyberattacks in 2026 would become increasingly driven by artificial intelligence, with threat actors leveraging generative AI to launch highly sophisticated large-scale phishing campaigns, create polymorphic malware that evades detection, and automate vulnerability exploitation.

Sysdig’s CTO Loris Degioanni warned that in the DARPA AI Cyber Challenge, autonomous systems uncovered eighteen zero-day vulnerabilities and patched sixty-one per cent in forty-five minutes without human input.

State-sponsored attackers, he predicted, would rapidly adopt “dark AI,” increasing zero-day attacks and automated exploitation.

Armis’s head of threat intelligence Michael Freeman went further:

By mid-2026, at least one major global enterprise will fall to a breach caused or significantly advanced by a fully autonomous agentic AI system. These systems, he continued, use reinforcement learning and multi-agent coordination to autonomously plan, adapt, and execute an entire attack lifecycle. A single operator will now be able to simply point a swarm of agents at a target.

The speed of this evolution was underscored by an event that occurred yesterday, March 27, as I was finalising this analysis.

Anthropic inadvertently made a draft blog post publicly accessible, stating that its forthcoming AI model, Claude Capybara, poses unprecedented cybersecurity risks.

Cybersecurity stocks dropped immediately on the news.

But the market reaction itself is the signal.

When a single draft blog post from an AI company can move an entire sector’s equity prices, you are no longer in a world where cybersecurity is a technical subdomain.

You are in a world where AI capability announcements function as geopolitical events.

Cisco’s State of AI Security 2026 report quantified the gap with brutal clarity: 83 per cent of organisations planned to deploy agentic AI capabilities across their business functions, while only 29 per cent reported being ready to operate those systems securely.

That fifty-four-point gap between ambition and readiness is the attack surface.

Nearly half — forty-six per cent — said they were not adequately prepared.

Perhaps the most consequential dynamic is the one Rohozinski and Spirito described as the convergence of nation-state sophistication with criminal-ecosystem maturation. When those orchestrating advanced persistent threats can leverage both state-sponsored resources and commoditised criminal tools, their attack capabilities combine the precision of espionage operations with the scale and persistence of criminal enterprises.

Discrete threat actors are giving way to a fluid ecosystem where techniques, tools, and tactics flow seamlessly between communities.

This is not a forecast.

It is a description of the current state of affairs.

The Research Landscape: What We Know and What We Do Not

Using the BERTopic method built on BERT — a bidirectional language model developed by Google that processes language in a context-aware manner — the study identified fourteen major research topics, ranging from intrusion detection systems and malware classification to privacy-preserving machine learning and adversarial attacks on neural networks.

The study’s methodology was rigorous: guided by the PRISMA framework, the identification phase searched the Dimensions database — chosen for its far greater journal coverage compared to Scopus or Web of Science — yielding 11,733 publications, of which 9,352 were retained for final analysis after screening for quality and completeness. The topic count was determined through both quantitative and qualitative evaluations, with manual review by three domain experts.

The findings confirmed that generative AI’s ability to create adaptive, predictive models offers unprecedented opportunities for pre-empting cyber threats and automating defence mechanisms.

But the study also underscored critical limitations.

The field remains fragmented, with insufficient integration of human-centric insights — psychology, sociology, and law — into cybersecurity solutions.

Generative AI simultaneously presents challenges in the creation and detection of sophisticated attacks, particularly deepfakes and adversarial AI.

This fragmentation is not merely academic.

It reflects a deeper structural problem:

The speed at which AI capabilities are advancing outpaces the ability of legal, regulatory, and normative frameworks to adapt. The researchers identified future areas of critical research interest involving both current AI applications and prospective quantum advancements toward strengthening cybersecurity resilience.

The implication is clear: the research community is still building the foundations that practitioners need, even as the threat environment accelerates past them.

The Attribution Problem Gets Worse

As the Anthropic case demonstrated, AI-enabled operations further attenuate the human signatures — communication patterns, working hours, linguistic idiosyncrasies, operational errors — that have traditionally provided forensic footholds for attribution.

When an AI agent autonomously generates exploitation code, crafts lures, and conducts lateral movement, the behavioural indicators that might point to a specific human operator are largely absent.

For AI systems operating within supervised commercial environments, a promising approach would focus on two phenomena jointly: how users iterate on their prompts, and how unusual their requests are relative to their baseline behaviour.

A legitimate security researcher developing defensive tools will typically refine prompts gradually, explore tangents, and recover from errors. An adversarial actor might submit highly polished prompts with minimal iteration, suggesting the real development happened elsewhere or that they are deliberately minimising their signature.

But privately deployed models present a harder problem.

Without access to interaction metadata, analysts are limited to examining outputs for structural tells — distinctive commenting conventions in generated code, idiosyncratic variable naming, or characteristic error-handling patterns that suggest which model family produced them.

The very absence of observable iteration may itself be a signal, potentially indicating deliberate operational-security measures characteristic of sophisticated threat actors.

In operations that do not involve a cooperative intermediary — the vast majority of cases — attribution will become correspondingly more difficult.

The Provenance Problem

Rohozinski and Spirito explored one potential structural response: comprehensive content provenance and watermarking.

In theory, cryptographically anchored, non-removable watermarking applied to all inputs used for training and all synthetic outputs could create traceable provenance chains.

The technical challenges, they conceded, are formidable and may be fundamentally intractable.

Current watermarking techniques remain vulnerable to paraphrasing, compression, adversarial rewriting, and data laundering.

Regeneration attacks use diffusion models to remove watermarks by introducing noise and subsequently denoising the content.

Forgery attacks aim to replicate and apply legitimate watermarks to unauthorised content.

The fundamental challenge extends beyond technical robustness to economic and political feasibility. Implementing comprehensive watermarking would require a level of industry coordination that borders on the fantastical.

Every major AI platform, every training-dataset curator, every fine-tuning service would need to adopt compatible standards.

The economic incentives run in precisely the opposite direction: competitive advantage often derives from proprietary approaches that resist standardisation.

Rather than treating AI as a tool that can be secured like other IT assets, they concluded, we may need to recognise it as a contested domain comparable to air, land, sea, space, and cyberspace.

That is not a metaphor.

It is a policy prescription.

And it is one that current institutional architectures are wholly unprepared to implement.

The OpenAI paper’s game-theoretic treatment of these dynamics is worth dwelling on.

Consider a simple scenario with two nations.

One leads in AI with capabilities significant for strategic advantage. They can choose to race ahead or enter an international agreement to preserve rough parity. The other is permanently behind, and can choose to try to pre-emptively hobble the adversary’s AI capability, or wait.

If AI benefits appear gradually, the side behind has no strong motivation to attack — they lose by attacking and would not suffer unacceptable loss from inaction.

But if AI quickly provides decisive advantage, the weaker side has stronger incentives to avoid a future where they suffer more damage than a pre-emptive strike would cause.

The link between computing resources and operational effects may be discontinuous.

One side can set traps the other cannot detect.

Deception risk becomes asymmetric.

This is not abstract.

It is the strategic environment in which every policy decision about AI governance is now being made.

What I Am Watching

A finding reported on March 26 — two days ago — illustrates the urgency better than any policy paper.

Just visit a page, and an attacker completely controls your browser.

The vulnerability chained an overly permissive origin allowlist with a DOM-based cross-site scripting flaw in a CAPTCHA component.

It has since been patched.

But the principle it demonstrates is the one that runs through this entire analysis: the attack surface of AI is not the model alone.

It is the entire ecosystem of extensions, integrations, protocols, and interfaces through which the model touches the world.

Securing the model without securing that ecosystem is like locking the front door while leaving every window open.

The evidence assembled across these three parts suggests several imperatives, none of them simple.

First, intelligence communities must embrace the Emergent Intelligence paradigm not as a distant aspiration but as an operational necessity. The three-phase model proposed by Grunspan, Smith, and VanderVeen provides a credible roadmap, but its realisation requires institutional courage — a willingness to restructure hierarchies, retrain workforces, and invest in the data infrastructure that underpins human-AI teaming.

The alternative, as the authors themselves put it, is to be outpaced by adversaries more adaptable and willing to deviate from legacy cultures.

Second, the cybersecurity community must transition from reactive to proactive. AI-enabled continuous red-teaming, automated threat hunting, and predictive intelligence must become standard practice. As Anthropic themselves recommended, security teams should experiment with applying AI for defence and build experience with what works in their specific environments.

Every month a decision is postponed accumulates risk that compounds. The cost of inaction in 2026 is asymmetrically high.

Third, international legal and normative frameworks must be urgently updated. The Tallinn Manual, the UN Charter’s non-intervention principle, and existing cybercrime conventions are grossly insufficient for autonomous agents conducting operations across borders at scale. As Wan Rosli documented, even the fundamental question of whether cyber espionage constitutes an international crime remains unresolved.

Fourth, the relationship between AI developers and national security institutions must be recalibrated. Anthropic used Claude extensively in analysing the enormous amounts of data generated during its own investigation — a striking demonstration of the dual-use nature of the technology.

The question Anthropic itself posed is the right one: if AI models can be misused for cyberattacks at this scale, why continue to develop and release them?

Their answer was that the very abilities that allow Claude to be used in these attacks also make it crucial for cyber defence.

That duality cannot be wished away.

It must be governed.

Fifth, the upstream threat vector demands a fundamentally different security architecture. Platform-hardening and behavioural detection, while necessary, are insufficient against model-centric attacks that shape AI behaviour before deployment. Content provenance, algorithmic transparency, and training-data integrity must become central concerns, not afterthoughts.

Finally, and most fundamentally, societies must grapple with the reality that artificial intelligence has introduced a form of agency into statecraft that is qualitatively different from any tool that preceded it.

The machines are not faster versions of human operators.

They introduce autonomous decision-making into domains — espionage, conflict, critical infrastructure — where the consequences of error are catastrophic and irreversible.

The Emergent Intelligence Framework’s insistence on human oversight, ethical governance, and strategic final decision-making is not a bureaucratic constraint.

It is a moral imperative.

In the closing passages of their Survival article, Rohozinski and Spirito wrote that the Anthropic case should be understood not as an isolated event but as a preview of coming attractions — not just about AI security but about the pace of technological change.

New tools are being applied to old problems, but new problems are also emerging from the tools themselves.

They are challenging the distinction between defender and attacker, authorised and unauthorised use, human and artificial decision-making — the conceptual dichotomies that underpin contemporary cybersecurity.

The OpenAI paper drew on historical parallels with the post-Second World War period, when intensifying competition and powerful new technologies drove a reimagining of national and international security. That earlier transformation required decades of work, the creation of new disciplines, the promulgation of new policies and doctrines, and broad collaboration between political leadership and technical experts.

With AI, they warned, there may not be decades available.

By the end of this decade, the competitive landscape will have been substantially determined.

That assessment is not a counsel of despair. It is a statement of the problem’s scale and urgency.

The intelligence communities, governments, technology companies, and international institutions that must navigate this landscape possess extraordinary resources — intellectual, technological, financial, and diplomatic.

What they lack, for now, is a shared understanding of the threat sufficient to overcome institutional inertia, strategic short-termism, and the natural human reluctance to confront transformations that unsettle established assumptions about how the world works.

The ghost is in the machine.

Whether it serves or subverts the interests of democratic societies will depend, in the end, not on the capabilities of the technology but on the choices of the humans who build, deploy, regulate, and defend against it.

Those choices must be made now.

The machines will not wait.